Case study: Federal government

Data-driven program evaluation leads to breakthrough results

The client’s perspective

Amid operational constraints brought on by the pandemic, and in response to Government Accountability Office (GAO) recommendations, a large regulatory organization responsible for global industry oversight had to rethink the way it executes its mission. Presented with two recommended alternatives for oversight, the organization needed to conduct a thorough program evaluation study to determine the best path forward.

Comprehensive program evaluation is vital for policymakers because the rules, regulations, and guidance they develop affect a large population of citizens. There is little room for error, and decision-making requires evidence-based strategies for change. The organization called on Eagle Hill Consulting to complete a pilot program evaluation and provide recommendations on the program’s viability and implementation.

A new view

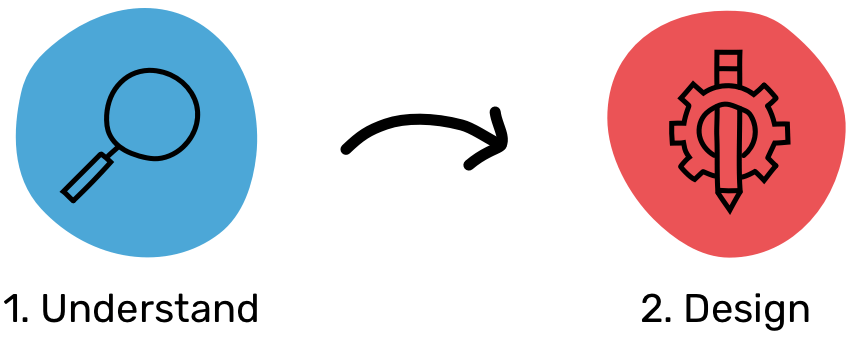

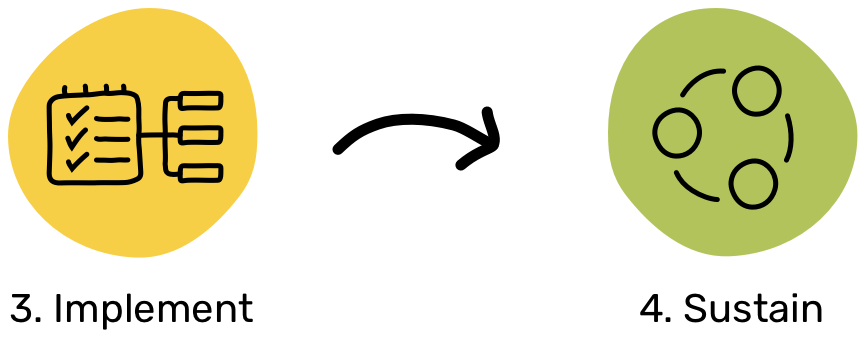

Eagle Hill deployed a systematic, four-phase approach to maintain progress and momentum while preserving the rigor of the program evaluation.

- Understand. Our team assessed the current state and business needs to identify strengths, gaps, dependencies, and opportunities for improvement. Activities in this phase included current-state process analysis, risk analysis, and stakeholder engagement and analysis.

- Design. Next, we incorporated a comprehensive organizational viewpoint by engaging subject matter experts (SMEs) within the organization to glean in-use best practices, lessons learned, and innovations, and to develop frameworks addressing both business and customer needs through a quantitative and qualitative lens.

- Implement. Our team coordinated with leadership, stakeholders, and staff to implement solutions. To garner buy-in and ensure the effectiveness of the solutions, our team completed a series of pilot experiments before incorporating improvements into a larger roll-out across the organization. In this phase, we identified key performance indicators (KPIs) for the assessment and developed business intelligence dashboards to monitor improvement and provide key insights into adoption and engagement across the organization.

- Sustain. Our team developed recommendations grounded in data to translate outcomes into everyday practice. Through governance and performance reports, we facilitated real-time decision-making to guide and sustain implementation.

How we got there

Eagle Hill brought an unconventional lens to the process and was able to mobilize the organization’s workforce by:

Conducting comprehensive literature and data reviews to inform future decision-making. Our team conducted research across a multitude of sources and identified potential advantages and disadvantages of the existing and available methods for oversight. We applied statistical modeling and analysis methods to identify and evaluate data collected via IT systems, analyzing a dozen pilot program parameters to set historical benchmarks. This analysis included a five-year review of regulatory data and detailed analytical methods that combined current best-practice approaches with contextual past performance.

Establishing pilot-specific standard operating procedures (SOPs) across 12 processes to document process management methodology and implement pilot-specific process improvements. In collaboration with SMEs, our team conducted an environmental scan of existing resources and documented changes to the current-state business model so that it reflected the desired future state and supported pilot implementation. While validating the SOPs with executives and SMEs across 10 offices, our team recorded feedback and changes in a change log to keep every change traceable. The SOPs supported pilot implementation by using a business model prototype to prepare staff for pilot launch.

Developing management and pilot monitoring tools to create risk-informed resource allocation that predicts time and level of effort based on current capacities. By assessing historical capacity and resourcing constraints, our team gained insights to inform pilot implementation. We used a risk-based decision framework that incorporated work activities, policy priorities, allocated resources, and calculated regulatory impact and financial estimates. The pilot team created a data visualization dashboard to capture key pilot measures and results of the program efforts. The dashboard included pilot progress, data collection activities, safety metrics, and initial outcomes, and it gave the organization an easy and effective way to deliver weekly and monthly status updates on the program’s progress.

Providing implementation services to support the execution of the pilot through a performance management implementation plan. Our team conducted pilot-specific training for 300+ staff and delivered an implementation plan that included key communications, virtual and interactive trainings, and an interactive tool to track training completion rates. With a continuous improvement framework and a well-documented approach, the pilot team produced an evidence-based archival log of 400+ documented artifacts, each traceable by name, description, location, and date.

Unconventional consulting—and breakthrough results

With our support, the pilot launched ahead of schedule, and the agency is now able to leverage the evaluation model to make data-driven decisions for executing future-state operations. In collaboration with key client representatives, our team delivered a comprehensive pilot plan that included a strategic operational plan, prioritization approach, and oversight structure. Further, our team provided a policy effectiveness study, a resource allocation tool, and recommendations on the viability and advisability of long-term implementation through an interim report that included data visualizations, continuous improvement recommendations, and an archival plan.